If you’ve ever tried to explain Paleo to a skeptic, you’ve probably encountered this argument. It’s not usually the first objection, but somewhere after “…not even whole wheat?” and “but doesn’t saturated fat give you heart disease?” comes “and didn’t cavemen all die when they were 25? Why would you want to eat like that?”

Statistics 101: Average vs. Mode

The argument that Paleo must be unhealthy because “the average caveman only lived to be 25” is a perfect example of how easy it is to draw false conclusions from true statistics. Consider the two statements below.

- The average caveman lived to be 25.

- The average age of death for cavemen was 25.

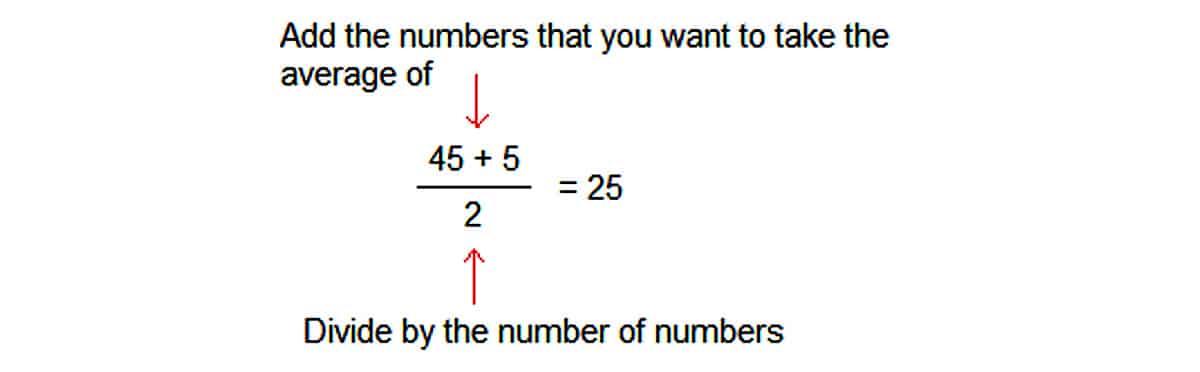

The first sentence describes a cultural norm, using average to mean “normal” or “typical.” According to the first statement, Joe Caveman born during the Paleolithic could reasonably plan on living to be 25, but not much older. The second statement also uses the word “average,” but with a very different meaning. Here, the word describes a mathematical formula: you find the average of a group of numbers by adding all the numbers up and dividing by how many numbers there are. For example, if Joe Caveman lived to be 45, but his brother Jim Caveman got tetanus and died at age 5, you can find the average lifespan of the two brothers:

(45 + 5) ÷ 2 = 25

Notice how the average lifespan of the two brothers isn’t even close to the actual lifespan of either of them.

Many people have a false understanding of actual Paleolithic life expectancy because they confuse these two meanings of the word “average.” In the cultural sense, meaning “normal” or “typical,” the word “average” means something close to the mathematical definition of mode, or the most common number in a set. This leads many people to read statistics about the “average” and think it means the mode. But this can be very misleading: the mathematical average of a set of numbers can be the same as the mode, but it doesn’t necessarily have to be – as Joe and Jim Caveman above demonstrate.

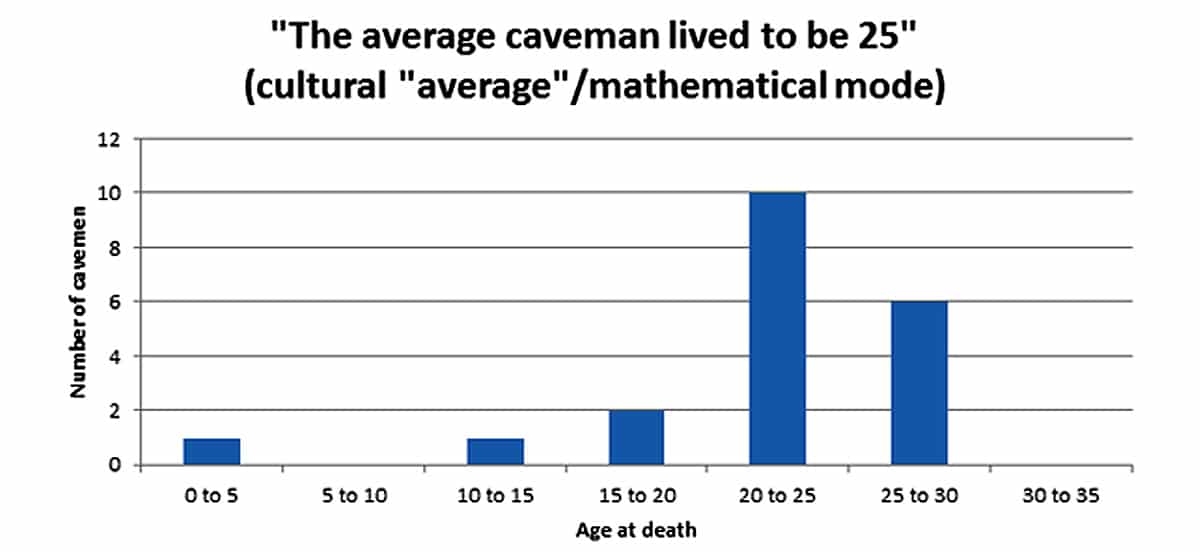

In a mathematical sense, as in the second statement, the average life expectancy of Paleolithic humans might well have been 25. However, this does not necessarily mean that the mode of the lifespans was 25. To illustrate the difference, the charts below show the life expectancy patterns that each of the two sentences above describe, projected onto a theoretical group of 20 cavemen.

In this graph, most cavemen are dying at around 25. There are a couple of outliers, but Joe Caveman born into this group could expect to live around 25 years. The mode (most common) age at death is the age 20-25 group. The mathematical average (found by adding up all the ages at death and dividing by 20) is also close to 25.

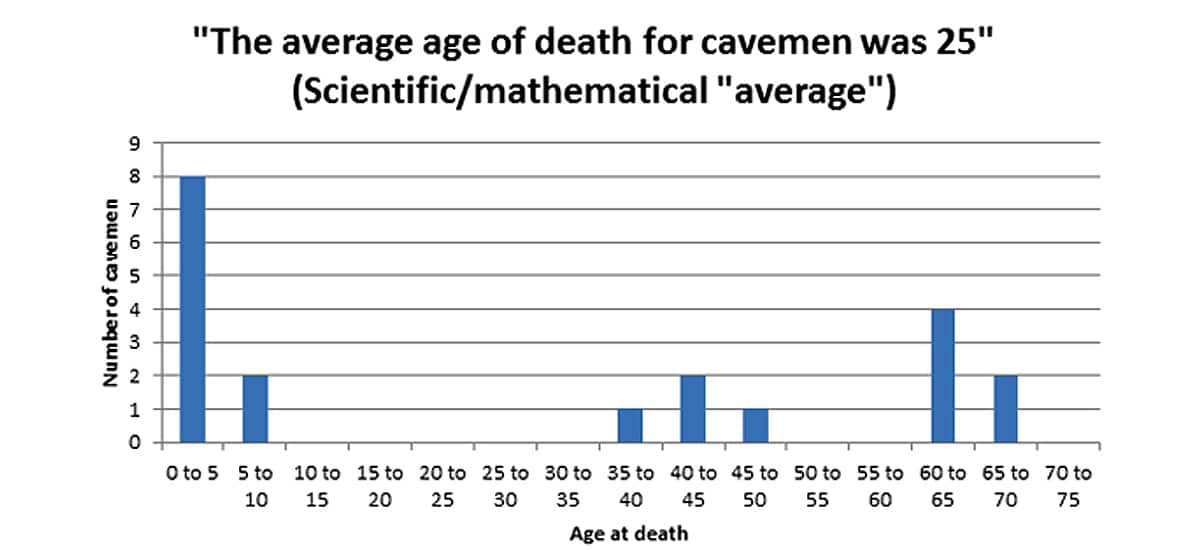

Taking the mathematical average of the ages at death of all the cavemen in this group would yield a number around 25, but that doesn’t necessarily mean that most of them – or even any of them – actually died at 25. The mode, in other words, is not necessarily the same as the average: in this graph, the average is around 25, but the mode is the age 0-5 group, and the range of lifespans varies widely with two different “typical” patterns: death in childhood, or survival into middle age. If Joe Caveman born into this tribe survives to his 10th birthday, he has a fighting chance of living to meet his grandchildren.

The second graph is close to what anthropologists mean when they talk about the “average life expectancy” of prehistoric humans being 25. This is why “average” as a mathematical concept can be so misleading: a heavy bias to one end of the data set can distort the average so dramatically that it no longer tells us anything meaningful about the lives of actual Paleolithic humans.
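
To make the contrast concrete, here is a minimal Python sketch of the two scenarios. The ages in both lists are made-up illustrative numbers, not data from any study; they were chosen only so that each hypothetical group of 20 cavemen has a mean age at death of 25. The helper name `modal_bin` is likewise just an assumption for this example.

```python
from collections import Counter
from statistics import mean


def modal_bin(ages, width=5):
    """Return the lower edge of the most common age-at-death bin."""
    bins = Counter((age // width) * width for age in ages)
    return bins.most_common(1)[0][0]


# Hypothetical group 1: nearly everyone dies in their early twenties.
clustered = [2, 17, 20, 21, 21, 22, 22, 23, 23, 23,
             24, 24, 24, 24, 26, 27, 28, 30, 41, 58]

# Hypothetical group 2: many deaths in early childhood,
# the rest spread across adulthood and middle age.
bimodal = [0, 1, 1, 2, 2, 3, 4, 7, 19, 24,
           28, 33, 36, 38, 41, 44, 47, 52, 56, 62]

for name, ages in (("clustered", clustered), ("bimodal", bimodal)):
    start = modal_bin(ages)
    print(f"{name}: mean = {mean(ages)}, most common bin = {start}-{start + 4}")
```

Both lists average out to 25, yet the most common five-year bin is 20-24 in the first group and 0-4 in the second, and nobody in the second group actually dies at 25.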

Infant Mortality and the Paleolithic Lifespan

The factor skewing the “average” data and giving rise to the false assumption that cavemen died very young is the infant mortality rate. This rate is difficult to determine from the records of actual Paleolithic populations. Researchers have attempted to determine the age at death of Paleolithic humans by analyzing bone remains, but these procedures measure Paleolithic bones against modern bones, which is not necessarily a relevant comparison: Paleolithic humans got much more Vitamin D than modern humans, and while their food supply was not as abundant, it was also uncontaminated by modern chemical toxins. Evidence from the Paleolithic is patchy enough that research on modern hunter-gatherers probably provides more accurate estimates: one study found that 30-40% of hunter-gatherer children die before age 15, most of those before age 5. Another study reached an even more depressing conclusion: about 43% of modern hunter-gatherer children never reach their 15th birthday (although the rates of survival were higher among forager-horticulturalists and acculturated hunter-gatherers, who had access to some modern medical technology). Children were especially vulnerable to all kinds of diseases and infections that we can now prevent with vaccines or cure with antibiotics. Thanks to the incredible advances in modern medicine, the child mortality rate in the United States today is 0.639%. Paleolithic child mortality is almost unimaginable to us.

This high infant mortality rate pulls down the “average age of death,” making it a largely meaningless statistic for anyone trying to figure out what life was actually like in the Paleolithic. One “average” number is convenient to quote at dinner-table arguments, but breaking down the population into age groups provides a much clearer and more accurate picture.
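
The pull that child deaths exert on the overall number is just a weighted average, which can be sketched in a few lines of Python. The inputs are assumptions for illustration: 40% is the upper end of the child mortality range quoted above, 2.5 is a hypothetical mean age for those childhood deaths, and the function names are invented for this example.

```python
def overall_mean_age(child_fraction, child_mean_age, survivor_mean_age):
    """Mean age at death for the whole group, as a weighted average of
    those who die in childhood and those who survive it."""
    return (child_fraction * child_mean_age
            + (1 - child_fraction) * survivor_mean_age)


def implied_survivor_mean(overall_mean, child_fraction, child_mean_age):
    """Invert the weighted average: the mean age at death among
    survivors that a given overall mean implies."""
    return (overall_mean - child_fraction * child_mean_age) / (1 - child_fraction)


# Assumptions for illustration only, not measured values.
CHILD_FRACTION = 0.40  # share of the group dying in childhood
CHILD_MEAN_AGE = 2.5   # hypothetical mean age of those childhood deaths
OVERALL_MEAN = 25      # the often-quoted "average"

implied = implied_survivor_mean(OVERALL_MEAN, CHILD_FRACTION, CHILD_MEAN_AGE)
print(f"Implied mean age at death for survivors: {implied:.1f}")
```

Under these assumed inputs, an overall average of 25 corresponds to survivors living to about 40 on average, not 25.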

So what were the mortality patterns of prehistoric humans? From studying the remains of Paleolithic cultures and the life patterns of modern hunter-gatherers, researchers have concluded that human mortality fits a U-shaped curve. Infancy and childhood were dangerous, but if you survived to 15, you could expect a reasonable lifespan: mortality rates started to increase again at around 40, doubling at 60 and again at 70. Gurven and Kaplan found that the modal (most common) age of death for hunter-gatherers who survived past 15 was 72. After excluding infant mortality, Stephan Guyenet found that the average lifespan of one Inuit group was 43.5, with 25% of the population living past 60. One study on the Hadza exhaustively ruled out all modern circumstances as significantly influencing mortality, and concluded that humans evolved for a postreproductive lifespan of around 20 years.

While the specific estimates vary, none of these estimates come close to 25 as the typical age at death. Also interesting are the causes of death among modern hunter-gatherer populations. Few people suffer from diabetes, heart disease, and other degenerative diseases of the modern world; the most common killers are diseases that modern medicine has rendered much less dangerous, especially gastrointestinal and respiratory diseases. The basic pattern of Paleolithic mortality was probably close to these observed patterns in modern hunter-gatherer societies: a shockingly high infant mortality rate, but a relatively high life expectancy for those who survived to reach puberty, with most deaths caused by diseases that pose relatively little threat to people in modern societies.

Reproduction and Population Growth

This argument is not simply a set of statistics derived from studies on modern hunter-gatherers and extrapolated to the Paleolithic on the assumption that living conditions would be essentially similar. It also has a basis in human biology, specifically reproductive biology. Assuming that a Paleolithic woman wanted to maximize her baby’s chance for survival, she probably would have breastfed it for at least 2 years. This means that children would have been spaced roughly 3 years apart: 2 years of breastfeeding plus 9 months of pregnancy. Studies on modern hunter-gatherers show women reaching menarche at an average age of 16, and giving birth to their first child around 19. (Hoggan uses 13, but this age is common only in modern industrial societies, where increased food intake and better nutrition have been steadily lowering the age of menarche since the 19th century. The average age at menarche for modern hunter-gatherers seems a much more accurate estimate for a Paleolithic woman.) This means that the average woman would have Child 1 at 19, Child 2 at 22, and Child 3 at 25, and then, according to the “cavemen died young” theory, she would die. But this is a completely unsustainable population pattern. Statistically, if 30-40% of children died in infancy, on average about one of these three children would die no matter what the mother did. Say that child is Child 1. The woman is now left with 2 children, but if she dies when Child 3 is a newborn, Child 3 will never get the benefits of her breast milk and nurture, and is therefore very unlikely to survive. Child 2 might stand a chance of living to adulthood, but if only one child per woman survived to reproduce, the human population would quickly die out.

Moreover, a woman might well not have become pregnant with each successive child as soon as she finished breastfeeding the previous one. She may have chosen not to have sex, or a scarcity of food might have triggered her body into starvation mode, shutting down her reproductive system and suppressing fertility. If a woman only managed to bear 2 children before age 25, the population model gets even more dismal.
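
The arithmetic behind this argument can be sketched as a toy model in Python. It is a deliberate simplification, not a demographic model: it uses only the figures from the text (first birth at 19, births 3 years apart, 30-40% child mortality), assumes that roughly two surviving children per woman are needed to keep a population steady, and the function names are invented for this example.

```python
def births_before_death(first_birth_age, spacing_years, last_age):
    """Count births if a woman has one child every `spacing_years`,
    starting at `first_birth_age`, up to and including `last_age`."""
    return len(range(first_birth_age, last_age + 1, spacing_years))


def expected_survivors(n_births, child_mortality):
    """Expected number of children who survive childhood."""
    return n_births * (1 - child_mortality)


FIRST_BIRTH = 19  # age at first birth, from the text
SPACING = 3       # years between births, from the text
REPLACEMENT = 2   # assumed: about two survivors per woman holds numbers steady

births = births_before_death(FIRST_BIRTH, SPACING, last_age=25)
for mortality in (0.30, 0.40):
    # Per the text, a newborn orphaned when its mother dies at 25 is
    # unlikely to survive, so count only the earlier births.
    survivors = expected_survivors(births - 1, mortality)
    verdict = "below" if survivors < REPLACEMENT else "at or above"
    print(f"child mortality {mortality:.0%}: {survivors:.1f} expected "
          f"survivors, {verdict} replacement")
```

Once the orphaned newborn is discounted, both ends of the 30-40% range leave fewer than two expected survivors per woman in this simplified model.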

On top of the mathematical improbability of this view, evolutionary biology presents another obstacle in the form of menopause. Nothing evolves without some purpose. Humans would not randomly start to grow horns or to see in the ultraviolet spectrum without some pressing environmental need for those features. Likewise, we wouldn’t have evolved to go through menopause unless it brought some evolutionary advantage. The “grandmother hypothesis” proposes that older women, who are more likely to die in childbirth and have babies with birth defects, provide more benefit to their family group by investing their time and energy in the children they already have. Menopause protects older women from pregnancies they can’t physically handle, so that they can continue to support their offspring and ensure the survival of their genetic line. But menopause never would have evolved if women in the Paleolithic were dying at 25: there would be no evolutionary pressure for a change that occurs between 40 and 50.

Conclusion

The evolution of menopause and the mathematical impossibility of population growth are two gaping holes in the hypothesis that Paleolithic humans died at 25. Available data from modern hunter-gatherer societies also contradict the hypothesis that prehistoric humans all died very young: members of these groups who live to reach puberty have life expectancies between 60 and 70 years.

Many people misunderstand these patterns because they only look at the “average” lifespan. The mathematical average of the ages at death of everyone in a Paleolithic group might have been 25, but breaking down this number by age reveals a very high infant mortality rate bringing down the group average: either you died at 3, or you lived to be 60. The much higher average life expectancies of the modern world are largely due to modern medical technology dramatically decreasing the rate of death in children under 10. Comparing the “average” life expectancies definitively proves that modern medicine is better than Paleolithic medicine – but it doesn’t tell us anything about diet.
